Researchers Found Puberty Blockers And Hormones Didn’t Improve Trans Kids’ Mental Health At Their Clinic. Then They Published A Study Claiming The Opposite. (Updated)

A critique of Tordoff et al. (2022)

This post is a good example of the sort of in-depth social science criticism I can only do thanks to my premium subscribers. Please consider becoming one or telling a friend about this newsletter.

An article called “Mental Health Outcomes in Transgender and Nonbinary Youths Receiving Gender-Affirming Care” was published in JAMA Network Open late in February. The authors, listed as Diana M. Tordoff, Jonathon W. Wanta, Arin Collin, Cesalie Stepney, David J. Inwards-Breland, and Kym Ahrens, are mostly based at the University of Washington–Seattle or Seattle Children’s Hospital.

In their study, the researchers examined a cohort of kids who came through Seattle Children’s Gender Clinic. They simply followed the kids over time as some of them went on puberty blockers and/or hormones, administering self-report surveys tracking their mental health. There were four waves of data collection: when they first arrived at the clinic, three months later, six months later, and 12 months later.

The study was propelled into the national discourse by a big PR push on the part of UW–Seattle. It was successful — Diana Tordoff discussed her and her colleagues’ findings on Science Friday, a very popular weekly public radio science show, not long after the study was published.

All the publicity materials the university released tell a very straightforward, exciting story: The kids in this study who accessed puberty blockers or hormones (henceforth GAM, for “gender-affirming medicine”) had better mental health outcomes at the end of the study than they did at its beginning.

The headline of the emailed version of the press release, for example, reads, “Gender-affirming care dramatically reduces depression for transgender teens, study finds.” The first sentence reads, “UW Medicine researchers recently found that gender-affirming care for transgender and nonbinary adolescents caused rates of depression to plummet.” All of this is straightforwardly causal language, with “dramatically reduces” and “caused rates… to plummet” clearly communicating improvement over time.

The email continues:

“Gender-affirming care is lifesaving care,” said Arin Collin, a fourth-year medical student at the University of Washington School of Medicine, and one of the study authors. “This care does have a great deal of power in walking back baseline adverse mental-health outcomes that the transgender population overwhelmingly [experiences] at a very young age.”

The web version has a softer headline but maintains an eye-grabbing opening: “In study findings published in JAMA Network Open, the investigators reported that gender-affirming care was associated with a 60% reduction in depression and a 73% drop in harmful or suicidal thoughts among the participants. They received their care at Seattle Children’s Gender Clinic.” When we talk about a “reduction” or a “drop,” of course we mean “over time.”

UW’s publicity push included other media assets, including a video of Collin discussing the finding and a “Gender-Affirming Care for Adolescents soundbite log” Google document. In the log, Collin explains: “The results were very dramatic. We had a 56.7% baseline rate of depression, and a 43.4% baseline rate of suicidality – but receipt of any form of gender-affirming care, either or [sic] the puberty blockers or the gender-affirming hormones, was associated with a 60% reduction in depression and a 73% reduction in suicidality.” Again, anyone reading this will interpret Collin’s claim as “These kids had high baseline rates of depression and suicide, and then they were put on puberty blockers or hormones, and then those rates went down significantly.”

To drive the point home further, Collin posted the study to /r/science on Reddit with the headline “Transgender and nonbinary youth who received gender affirming medical care experienced greatly reduced rates of suicidality and depression over the course of 12 months.” The post has 13,400 upvotes and more than 1,700 comments, many of them remarking on what an important finding it is. Here Collin is plainly, indisputably arguing that the kids who went on GAM experienced mental health improvement during the study. (I reached out to Collin on Reddit but didn’t hear back.) Collin also posted it to the /r/medicine subreddit with a more neutral headline, but echoed that same claim in a comment: “The short version is that transgender and nonbinary youth who received gender affirming care (defined as either Leuprolide, Testosterone, or Estradiol) of any sort experienced a 60% decrease in baseline depression and a 73% reduction in baseline suicidality over the course of 12 months.”

It isn’t just the publicity materials; the paper itself tells a similar story, at least a few times. The “Key Points” box found to the right of the abstract reads, “In this prospective cohort of 104 TNB [transgender and nonbinary] youths aged 13 to 20 years, receipt of gender-affirming care, including puberty blockers and gender-affirming hormones, was associated with 60% lower odds of moderate or severe depression and 73% lower odds of suicidality over a 12-month follow-up.” The body of the paper also contains at least two sentences clearly claiming that the kids who went on blockers and hormones experienced improved mental health over time:

Our findings are consistent with those of prior studies finding that TNB adolescents are at increased risk of depression, anxiety, and suicidality and studies finding long-term and short-term improvements in mental health outcomes among TNB individuals who receive gender-affirming medical interventions.

…

Our study provides quantitative evidence that access to PBs or GAHs in a multidisciplinary gender-affirming setting was associated with mental health improvements among TNB youths over a relatively short time frame of 1 year. [endnotes omitted]

What’s surprising, in light of all these quotes, is that the kids who took puberty blockers or hormones experienced no statistically significant mental health improvement during the study. The claim that they did improve, which was presented to the public in the study itself, in publicity materials, and on social media (repeatedly) by one of the authors, is false.

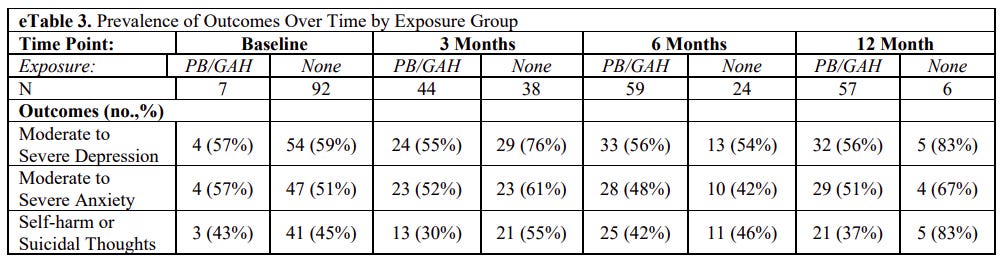

It’s hard even to figure this out from reading the study, which omits some very basic statistics one would expect to find, but the non-result is pretty clear from eTable 3 in the supplementary materials, which shows what percentage of study participants met the researchers’ thresholds for depression, anxiety, and self-harm or suicidal thoughts during each of the four waves of the study:

At each time point, “PB/GAH” refers to the kids who reported being on puberty blockers or gender-affirming hormones, while “none” refers to the kids who reported no such treatment.

Among the kids who went on hormones, there isn’t genuine statistical improvement here from baseline to the final wave of data collection. At baseline, 59% of the treatment-naive kids experienced moderate to severe depression. Twelve months later, 56% of the kids on GAM experienced moderate to severe depression. At baseline, 45% of the treatment-naive kids experienced self-harm or suicidal thoughts. Twelve months later, 37% of the kids on GAM did. These are not meaningful differences: The kids in the study arrived with what appear to be alarmingly high rates of mental health problems, many of them went on blockers or hormones, and they exited the study with what appear to be alarmingly high rates of mental health problems. (Though as I’ll explain, because the researchers provide so little detailed data, it’s hard to know exactly how dire the kids’ mental health situations were.)

If there were improvement, the researchers would have touted it in a clear, specific way by explaining exactly how much the kids on GAM improved. After all, this is exactly what they were looking into — they list their study’s “Question” as “Is gender-affirming care for transgender and nonbinary (TNB) youths associated with changes in depression, anxiety, and suicidality?” But they don’t claim this anywhere — not specifically. They reference “improvements” twice (see above) but offer no statistical demonstration anywhere in the paper or the supplemental material. I wanted to double-check this to be sure, so I reached out to one of the study authors. They wanted to stay on background, but they confirmed to me that there was no improvement over time among the kids who went on hormones or blockers.

That’s why I think the University of Washington–Seattle, JAMA Network Open, and the authors of this study are simply misrepresenting it. Anyone who reads their publicity materials, or who reads the study itself in a less-than-careful manner, or who finds Collin’s Reddit posts, will conclude that this study found that access to GAM improved trans kids’ mental health outcomes over time.

But that simply didn’t happen.

How The Authors Are Able To Claim The Treatments Worked

The researchers can’t offer any specific statistical evidence that the kids who went on blockers and hormones improved over time. So instead, they claim that according to a statistical model they ran that supposedly adjusted for other, potentially confounding factors, the kids who went on GAM did better than the kids in the sample who didn’t. In the abstract, they write: “After adjustment for temporal trends and potential confounders, we observed 60% lower odds of depression (adjusted odds ratio [aOR], 0.40; 95% CI, 0.17-0.95) and 73% lower odds of suicidality (aOR, 0.27; 95% CI, 0.11-0.65) among youths who had initiated PBs or GAHs compared with youths who had not.” As the on-background researcher confirmed, this is an average of observations conducted at all time points in the study, not just a comparison between the baseline and 12-month observations.
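For readers who want to see where the headline percentages come from, here is a minimal sketch of the arithmetic. It uses only the adjusted odds ratios and confidence intervals quoted from the abstract; the function and variable names are illustrative, not anything from the paper.

```python
def pct_lower_odds(odds_ratio: float) -> float:
    """Convert an odds ratio into 'percent lower odds' (1 - OR, as a percentage)."""
    return round((1.0 - odds_ratio) * 100.0, 1)


# Adjusted odds ratios and 95% CIs as quoted from the abstract.
reported = {
    "depression": {"aOR": 0.40, "ci": (0.17, 0.95)},
    "suicidality": {"aOR": 0.27, "ci": (0.11, 0.65)},
}

summary = {}
for outcome, r in reported.items():
    ci_low, ci_high = r["ci"]
    summary[outcome] = {
        "point": pct_lower_odds(r["aOR"]),
        # The interval flips: the upper OR bound gives the smallest reduction.
        "range": (pct_lower_odds(ci_high), pct_lower_odds(ci_low)),
    }
    print(outcome, summary[outcome])
```

So “60% lower odds” and “73% lower odds” are simply 1 minus the odds ratio. They describe a between-group comparison pooled across time points, not a before-and-after change within the treated group.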

“Mental health problems plummeted among kids who went on X” is a very different claim than “Kids who went on X didn’t experience improved mental health, but had better outcomes compared to kids who didn’t go on X.” That difference matters a great deal here. After all, in the present debate over blockers and hormones, a very common refrain is that these medications are so powerfully ameliorative when it comes to depression and suicide that it is irresponsible to deny or delay their administration to kids. It’s frequently argued that if kids don’t have access to this medicine, they will be at a high risk of killing themselves. I don’t know what this claim could possibly mean if it doesn’t mean that upon starting blockers or hormones, a trans kid with elevated levels of depression or suicidal ideation will experience relief. These researchers, firmly enmeshed in this debate, found that kids who went on these medications did not experience relief — and yet they don’t mention this worrisome fact anywhere in their paper.

Rather, they premise their argument on the fact that while the kids who went on GAM stayed stable, the kids who didn’t go on GAM got worse. As the on-background researcher explained in an email, “[W]hen we adjusted our models for receipt of PB/GAH [that is, puberty blockers and gender-affirming hormones] we saw that depression and suicidality significantly worsened among youth who had not (yet) started PB/GAH at 3 and 6 months relative to baseline levels.” So the action here, as the researcher admits, comes not from the kids in the treatment group getting better, but rather from the kids in the comparison group getting worse.

This brings us into very different territory, medicine-wise. Sure, there are some contexts where merely keeping someone stable might be considered a good outcome. If you give someone with stage IV cancer a drug and it keeps their tumors the same size for many months or even years, that’s often a win. If you give an Alzheimer’s patient a drug and it arrests their neurocognitive decline for a protracted period, that would be a win (I don’t think we even have any drugs like this). But the point of puberty blockers and especially hormones is to make kids better, and they didn’t get better in this study1. I’m not saying that improvement in a treatment group is necessary and sufficient for claiming a treatment works — you also need a control or comparison group (click the footnote for a brief explanation of what I mean here if this confuses you at all2) — but if you give someone medicine to treat something, and their symptom doesn’t improve, shouldn’t that cause you to question whether the treatment works?

The kids on GAM in this study didn’t get better over time. So if we’re going to accept the researchers’ claim that their paper offers evidence that GAM works, that requires that we accept that the differences in outcomes between the GAM and no-GAM groups were caused — or mostly caused — by the latter group not accessing GAM. But why should we? There are many other potential explanations for this pattern of results, and they’re mostly ignored by the researchers, with the exception of a couple points where they offer fairly generic assurances that they believe they have accounted for the possibility of omitted confounding variables.

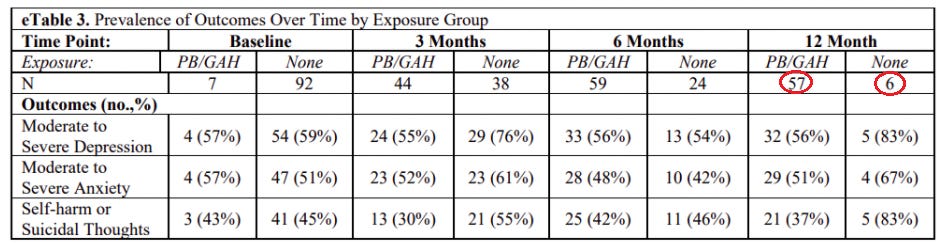

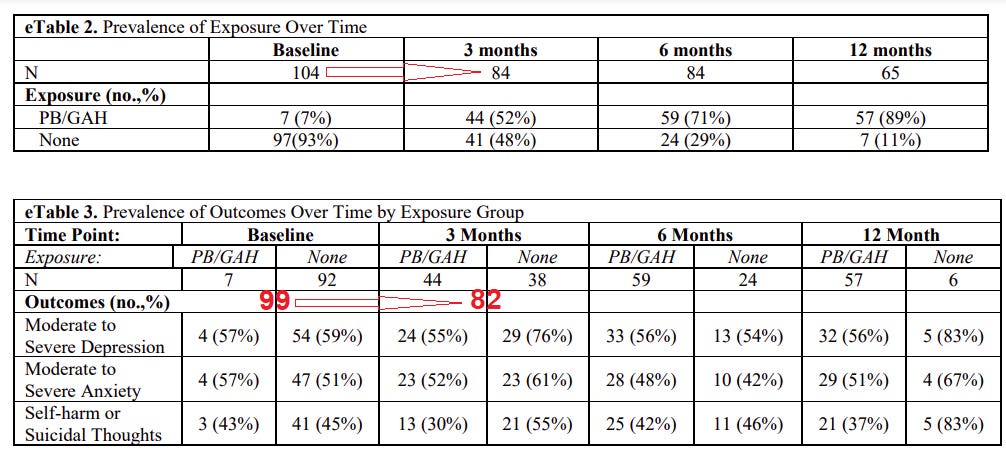

Despite the fact that two of the authors worked at Seattle Children’s Hospital, where the gender clinic is based, the paper doesn’t include a single word of even informed speculation attempting to explain why some kids accessed GAM and others did not. Nor do the authors seem to notice that by the end of the study, the no-GAM group has dwindled to a grand total of six kids who reported mental health data, as compared to 57 in the group receiving treatment.

To be clear, attrition in a study like this is normal. Inevitable. If you send out four waves of surveys, you’re going to have far fewer responses at the end than you did at the beginning. A much bigger problem is a significant difference in the rates of attrition between two groups: when one group falls out of the study at a much higher rate than the other. This “undermines the assumption that the program and control groups have the same measured and unmeasured characteristics at baseline,” as a helpful guide published by a pair of researchers at Mathematica, a top public policy analysis firm, puts it. “It can create an imbalance between the two groups if the characteristics of those who have follow-up data differ from those who do not.” (There isn’t a traditional “control” group in the JAMA Network Open paper, but the same logic about differential rates of attrition applies any time you’re comparing two groups in a manner that rests on an “all else is more or less equal” premise. If all else is far from equal, your comparison is broken.)

The vast majority of kids who dropped out of this study didn’t access GAM. By the end of the study, just 6 of the 63 remaining kids who provided mental health data (9.5%) were in the no-GAM group. Overall, according to the researchers’ data, 12/69 (17.4%) of the kids who were treated left the study, while 28/35 (80%) of the kids who weren’t treated left it3. This is a massive difference. (They’re a small group, but the no-GAM kids who were still in contact with the clinic at the 12-month mark also had sky-high rates of moderate to severe depression and self-harm or suicidal thoughts, which might suggest something unusual about them as compared to everyone else in the cohort.)
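The attrition figures can be checked with a few lines of arithmetic. This is just a sketch using the counts quoted above; the variable names are made up for illustration.

```python
def pct(numerator: int, denominator: int) -> float:
    """Percentage rounded to one decimal place."""
    return round(100.0 * numerator / denominator, 1)


# Counts as quoted from the researchers' data.
treated_total, treated_left = 69, 12
untreated_total, untreated_left = 35, 28
final_wave_total, final_wave_no_gam = 63, 6

treated_attrition = pct(treated_left, treated_total)
untreated_attrition = pct(untreated_left, untreated_total)
no_gam_share_at_end = pct(final_wave_no_gam, final_wave_total)

# How many times higher the dropout rate was in the untreated group.
attrition_ratio = round(
    (untreated_left / untreated_total) / (treated_left / treated_total), 1
)

print(treated_attrition, untreated_attrition, no_gam_share_at_end, attrition_ratio)
```

By these numbers, the untreated kids left the study at roughly 4.6 times the rate of the treated kids.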

(Deleted ‘Update’ went here; see footnote4.)

Because the researchers don’t even mention this large difference in the two groups’ rates of attrition, all we can do is speculate. But it’s easy to see how it might affect their findings. Let’s say, for example, that a handful of the kids whose characteristics were measured at baseline weren’t particularly gender dysphoric: maybe they were just going through a rough patch, or maybe their socially conservative parents took them to a gender clinic over “cross-gender” behavior but nothing was really “wrong” with them. It stands to reason these kids and their parents would lose touch with the clinic because they no longer required its services. If these kids did pretty well over the next year, that would mean you’re taking a handful of kids who would have represented pretty healthy members of the comparison group and tossing them out into the ether, nudging that group in a less-healthy-seeming direction on average. So when the on-background researcher tells me that “depression and suicidality significantly worsened among youth who had not (yet) started PB/GAH at 3 and 6 months relative to baseline levels,” it’s important to remember that in ~~every~~ **two out of the three** new waves of the study, significant numbers of kids are dropping out, and overall the vast majority of the dropouts are on the “no GAM” side. If the dropouts had fairly good mental health, that could give the false appearance of the group “worsening” on average, even if individual members of the group aren’t actually worsening. (Imagine you have just two kids at the first wave, one suicidal and one not. If the non-suicidal kid drops out before the second wave and the suicidal kid remains suicidal and in the study, your group has “worsened” from 50% suicidal to 100% suicidal.)

(Correction: I shouldn’t have said there are dropouts “every new wave of the study,” as I originally did. Between the second and third waves there aren’t any dropouts, and in fact the sample size in eTable 3 seems to go up by one, perhaps due to a prior nonresponder now responding. But in the shift from 3 Months to 6 Months, about 14 members of the no-GAM group appear to flow into the GAM group, so it’s the same issue: When you say the no-GAM group has gotten worse, you’re talking about a group that is shrinking in size, and it seems unlikely that the kids who leave the study or switch to the GAM group are doing so randomly. Anyway, the correct way to describe the dropout pattern is that there are significant numbers of dropouts between the first wave and the second, and between the third wave and the fourth; see eTables 3 and 4. I’m leaving the sentence up with one word struck through and the new, now-correct language in bold.)
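The selective-dropout problem is easy to demonstrate with a toy calculation. The sketch below reproduces the two-kid example and adds a slightly larger group; every number in it is hypothetical and has nothing to do with the study’s actual participants. The key feature is that no individual’s status ever changes.

```python
def prevalence(statuses):
    """Percentage of respondents flagged True (e.g. reporting suicidal thoughts)."""
    statuses = list(statuses)
    return round(100.0 * sum(statuses) / len(statuses), 1)


def drop(group, dropouts):
    """Return the group without the listed participants; nobody's status changes."""
    return {kid: status for kid, status in group.items() if kid not in dropouts}


# The two-kid example: one suicidal kid, one not; the non-suicidal kid drops out.
two_kids = {"kid_a": True, "kid_b": False}
two_kids_wave2 = drop(two_kids, {"kid_b"})

# A HYPOTHETICAL comparison group: 5 of 10 flagged at wave 1,
# then 4 of the unflagged kids lose touch with the clinic.
ten_kids = {f"kid_{i}": i < 5 for i in range(10)}
ten_kids_wave2 = drop(ten_kids, {"kid_5", "kid_6", "kid_7", "kid_8"})

print(prevalence(two_kids.values()), prevalence(two_kids_wave2.values()))
print(prevalence(ten_kids.values()), prevalence(ten_kids_wave2.values()))
```

In both cases the group-level rate jumps (from 50% to 100%, and from 50% to about 83%) purely because healthier members left, which is why a “worsening” comparison group can’t be taken at face value without knowing who dropped out.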

On the flip side, what accounts for the handful of kids who didn’t go on medication, but who did stay in touch with the clinic for six months or a full year? Because, again, the researchers exhibit such incuriosity about the quirks of their own data, all we can do is speculate. One possibility is that these kids had severe or worsening mental health comorbidities that, in the eyes of the clinicians, needed to be brought under control before blockers or hormones could be administered. This is a fairly frequent occurrence in youth gender clinics.

Frequent enough, at least, that the proposed next version of the adolescent guidelines for the World Professional Association for Transgender Health’s Standards of Care includes this recommendation:

[W]hen a TGD [transgender or gender-diverse] adolescent is experiencing acute suicidality, self-harm, eating disorders or other mental health crises that threaten physical health, safety must be prioritized. According to the local context and guidelines, appropriate care should seek to mitigate threat or crisis such that there is sufficient time and stabilization for thoughtful gender-related assessment and decision making. For example, an actively suicidal adolescent may not be emotionally able to make an informed decision regarding [sic, since the sentence ends here — in context, I think what’s missing here is something like “these treatments”]. If indicated, safety-related interventions should not preclude starting gender affirming care.

If even a small handful of the kids in the Tordoff study needed “sufficient time and stabilization for thoughtful gender-related assessment and decision making,” that could partially account for a cohort of kids experiencing mental health problems — maybe genuinely worsening ones, in some cases — and not receiving GAM. This would represent causality flowing in the exact opposite direction as what the researchers claim: Rather than it being the case that the kids’ mental health was bad because they didn’t access GAM, they didn’t access GAM because their mental health was bad.

We Can’t Check These Theories Because The Researchers Refuse To Share Their Data

I thought I’d at least be able to nibble around the edges of some of these questions based on my correspondence with Diana Tordoff. She’s a PhD student in epidemiology at UW–Seattle, listed as the corresponding author for the paper, and the one who “takes responsibility for the integrity of the data and the accuracy of the data analysis.” She’s the one who went on Science Friday to tout the importance of this study — more on that in a bit.

In a March 6 email, Tordoff wrote, “Although we provided the raw data in the supplement for transparency, I advise caution in interpreting these data as is.” Great, I thought; I could hand off the data to someone who is better at this stuff than I am and ask what they think. Except the data wasn’t actually included in the supplementary material. I asked Tordoff where it was. Radio silence. I sent a polite follow-up email. Again, nothing.

So I reached out to the media contact listed in all that material UW–Seattle had sent out and asked her if the data would be available. “Jesse, contacted the team, and they have no further comment at this time,” said the PR person. “They decided to let the methods section speak for itself.”

The problem is, there isn’t much of a methods section to speak of, so there are a lot of serious questions about the validity of this research — including whether the authors took the proper statistical approach at all.

The Researchers Might Not Have Used The Right Statistical Method

The authors of Tordoff et al. got their results using a method called generalized estimating equations. This is above my pay grade, stats-wise, so I reached out to a coauthor on what I believe is the single most-cited paper on this technique I could find, James Hanley. He’s a professor of epidemiology and biostatistics at McGill University who has taught many methods courses, and he agreed to look at the Tordoff et al. study and tell me what he thought. He generously marked up a copy of the paper with a bunch of his feedback and treated me to a one-on-one Zoom-tutoring session explaining what he found.

At the most basic, eyeballing-the-data level, Hanley agreed with me that the results communicated in the supplemental materials were damning for the researchers. If the treatments “were that good, the later percentages would be a lot lower,” he said of the percentages in the rightmost part of eTable 3. “Of course, the entire cohort was not surveyed at 12 months, so we cannot say how the entire group was faring at that timepoint, but of those that were questioned at the end, there is a substantial percentage that is reporting suicidal thoughts.” If these treatments did successfully address suicidality and depression, one would expect the corresponding numbers to have dropped considerably, which they didn’t.

But Hanley also said he simply thought the researchers chose a questionable technique for their analysis, one that obscures many potentially important features of their data, especially the dropout issues. He described generalized estimating equations as “quite a poor person’s way of dealing with [this sort of data].” “Today very few data-analysts would do this for longitudinal data, where you have four measurements on the same person,” he said. “There’s fancier ways of handling such data today.” Hanley thinks a so-called multilevel or hierarchical model would have been better. Also, “[I]nstead of dichotomizing the scores, leaving them as-is would have allowed us to see a curve for each participant.” I’ll explain this in more depth in the next section, but here Hanley means a curve showing each person’s or group’s mental health scores over time.

In this sort of analysis, said Hanley, “We would also see where the follow-up of each participant stopped, and where that person was at when they left. It might be that they left because they were feeling better, or because they felt that the treatment was not doing that much for them.” This would allow the researchers to more specifically “count who went up and who went down,” mental health–wise, he explained. “And you could probably even say, if they got treatment here, did they go down? So it's a lot more transparent to do things properly by these hierarchical models.”
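To make the “count who went up and who went down” idea concrete, here is a rough sketch of the bookkeeping that per-participant curves would allow. It is not a hierarchical model, just descriptive tallying, and the participant IDs and scores are entirely hypothetical, since the real per-person data hasn’t been shared.

```python
from collections import Counter

# HYPOTHETICAL PHQ-9 totals per wave (baseline, 3, 6, 12 months).
# None means the participant did not respond at that wave.
trajectories = {
    "p1": [18, 15, 12, 9],
    "p2": [11, 12, None, None],
    "p3": [8, None, None, None],
    "p4": [14, 14, 17, 20],
}


def summarize(scores):
    """Report where follow-up stopped and which way the score moved."""
    observed = [(wave, s) for wave, s in enumerate(scores) if s is not None]
    _, first = observed[0]
    last_wave, last = observed[-1]
    if len(observed) == 1:
        direction = "baseline only"
    elif last < first:
        direction = "down"  # lower PHQ-9 means fewer depressive symptoms
    elif last > first:
        direction = "up"
    else:
        direction = "flat"
    return {"last_wave": last_wave, "last_score": last, "direction": direction}


summaries = {pid: summarize(s) for pid, s in trajectories.items()}
counts = Counter(s["direction"] for s in summaries.values())
print(summaries)
print(dict(counts))
```

Even this crude tally shows two things the published odds ratios hide: where each person was when they stopped responding, and how many people only ever contributed a baseline reading.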

Another problem Hanley noted is that 17 individuals in the sample provided only baseline data, subsequently dropping out of the study — and yet this data was included in the final model. Here’s where we risk getting slightly too in the weeds, and I don’t want to get lost, because I think many of the critiques of the Tordoff et al. study don’t rely on a particularly sophisticated understanding of statistics. But what Hanley is saying here is that the researchers’ model, which for the stats geek is this…

…draws significantly from these one-time observations. “That, to me, is major because any comparison they make now has a subtraction involving some people that aren’t there anymore and can only contribute to the baseline prevalences.” The researchers don’t explain why they include this data in the model, but Hanley’s point — that a bunch of people immediately drop out between waves one and two — is apparent from a glimpse at eTables 2 and 3. There are 20 immediate dropouts when it comes to the number of study participants who responded to the survey at all, and 17 when it comes to the number who provided responses on the mental health measures.

Overall, Hanley described generalized estimating equations as “a very crude way to deal with [this type of data], and kind of a naive way.” The technique “gives them great power to kind of make any comparison they want, and then talk about change — well, it is change, but they don't talk about change properly.”

The Problem With Variable Dichotomization

The dichotomization issue Hanley mentioned is extremely important.

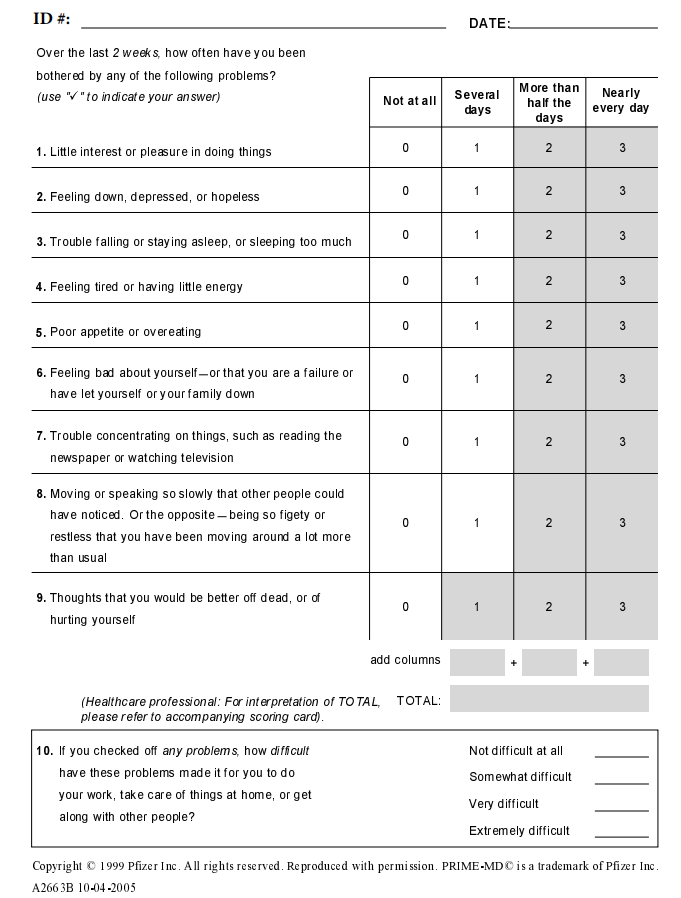

As I noted above, Tordoff et al. suffers from a strange lack of concrete statistical information. Let’s focus on the main instrument the researchers used to gauge depression and suicidality, the so-called Patient Health Questionnaire 9-item, or PHQ-9, which consists of nine items plus a final item that isn’t factored into the score, and which is filled out as follows (or click here):

The lowest possible score is zero (9 x 0), and the highest possible score is 27 (9 x 3). Rather than report on the average values participants in the study reported, the researchers dichotomized this data — they turned it into a variable that has only two values, yes or no (or 1 or 0, if you like). If you score 10 or higher, you’re coded as a yes, meaning you have “moderate or severe depression.” Lower than 10, you’re coded as a no, meaning you do not have “moderate or severe depression.” The researchers also dichotomized suicidality, which is covered by the ninth item — whether the respondent has experienced “Thoughts that you would be better off dead, or of hurting yourself” and which is scored from 0 to 3 based on how frequently the respondent said they felt that way in the last couple weeks. If they get a 1, a 2, or a 3, they’re considered a yes regarding self-harm or suicidal ideation; if they get a 0, they’re a no.
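The scoring and dichotomization rules just described can be written out in a few lines. This is a sketch based only on the cutoffs described above (10 or higher on the total, 1 or higher on the ninth item); the function names are invented for illustration and the sample responses are hypothetical.

```python
def phq9_total(items):
    """Sum the nine PHQ-9 items, each scored 0 to 3, for a total of 0 to 27."""
    if len(items) != 9 or any(i not in (0, 1, 2, 3) for i in items):
        raise ValueError("PHQ-9 needs exactly nine items scored 0-3")
    return sum(items)


def moderate_or_severe_depression(items, cutoff=10):
    """Dichotomized depression outcome: yes if the total is at or above the cutoff."""
    return phq9_total(items) >= cutoff


def self_harm_or_suicidal_thoughts(items):
    """Dichotomized suicidality outcome: yes if the ninth item is 1, 2, or 3."""
    return items[8] >= 1


# HYPOTHETICAL respondents.
mild = [1, 1, 1, 1, 1, 1, 1, 1, 0]      # total 8
borderline = [1, 1, 1, 1, 1, 1, 1, 1, 2]  # total 10
maximal = [3] * 9                         # total 27

for name, items in [("mild", mild), ("borderline", borderline), ("maximal", maximal)]:
    print(name, phq9_total(items),
          moderate_or_severe_depression(items),
          self_harm_or_suicidal_thoughts(items))
```

Note that the respondent scoring 10 and the respondent scoring 27 come out identical on both yes/no outcomes.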

This strips out a lot of useful information. For example, in the Tordoff et al. study, someone who scores a 27 on the PHQ-9 — an almost unfathomable level of distress — is viewed as having the same mental health status as someone who scores a 10. If you read through the above items, it’s easy to imagine that someone who scores a 10 is having a bit of a difficult time, but is still pretty functional. Not so for folks at the higher ranges. Most PHQ-9 scores, in this study, count as a yes, you have depression problems.

The researchers don’t report on their participants’ mental health in a careful, detailed way, save for one table that provides a breakdown of what percentage of participants were in each “bucket” of minimal, mild, moderate, moderately severe, and severe depression at baseline (which, again, includes 17 readings of kids who were subsequently lost to all follow-up, so it’s unclear what we should do with them). You won’t even find the average PHQ-9 score at baseline anywhere in the paper or in the supplementals, let alone the scores at various waves of data collection, or broken down by GAM/no-GAM. The reported odds ratios refer simply to the probability of someone in the study getting a 10 or higher on the PHQ-9, a 1 or higher on the suicide question, or a 10 or higher on an anxiety scale (I’m mostly ignoring that one because the researchers report a null finding there, anyway). Compare this lack of basic data to how a group of Dutch researchers reported their findings in an important 2014 study of youth medical transition. In that paper, the authors provided clear information about the average outcome scores at each wave of data collection. There’s no mystery about exactly what the numbers are.

There are many downsides to the statistical bluntness and lack of transparency in the Tordoff et al. study. For example, by the study’s method, a kid who scores a 24 on the PHQ-9 at baseline and a 15 three months later hasn’t improved at all (he is “moderately or severely depressed” at both waves), whereas a kid who scores a 10 at baseline and a 9 three months later has graduated from “moderately or severely depressed” to “not moderately or severely depressed.” Under this scheme, a one-point decrease can count for more than a nine-point one. This is a nice advertisement for the limits of variable dichotomization.
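The same comparison, spelled out as a short sketch. The two kids and their scores are the hypothetical ones from the paragraph above; the cutoff of 10 is the one the study used.

```python
CUTOFF = 10  # "moderate or severe depression" threshold on the PHQ-9 total


def flagged(score: int) -> bool:
    """Dichotomized status: True if the score is at or above the cutoff."""
    return score >= CUTOFF


# (baseline score, three-month score) for the two hypothetical kids.
kids = {"kid_1": (24, 15), "kid_2": (10, 9)}

results = {}
for kid, (baseline, follow_up) in kids.items():
    results[kid] = {
        "point_change": follow_up - baseline,
        # Under dichotomization, "improved" only means crossing the cutoff.
        "counts_as_improved": flagged(baseline) and not flagged(follow_up),
    }
    print(kid, results[kid])
```

The kid whose score fell nine points registers no change at all, while the kid whose score fell one point registers as a success.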

To be sure, sometimes dichotomized variables are useful. Everywhere in medicine you have to establish cutoff points simply to determine, or help determine, who “officially” has a condition, is eligible for treatment, and so on. But when researchers publish an entire paper based in part on a 27-point scale and offer no insights into how the treatment and comparison groups fared, other than whether or not they scored a 10 or higher on that scale, I really think it suggests they are hiding something: that they ran other analyses, didn’t find what they wanted to find, and then chose a very crude method (to borrow Hanley’s adjective) that allowed them to “show” that a treatment they favor “works.”

One potential hint that this might be the case is that at no point do the authors show that they checked whether their findings are robust to different thresholds. As they note, the PHQ-9’s 27-point scale has other cutoff points, such as 15 (moderately severe) and 20 (severe). If you’re going to stick solely to dichotomized measures, why not at least report what happens when you apply those different cutoffs to the same model? I bet the answer is that usually, they got nothing — no evidence the GAM group did better than the no-GAM group.
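Here is a sketch of what such a robustness check might look like at its simplest: apply each PHQ-9 cutoff to the same scores and see whether the gap between groups survives. The scores below are invented purely to show the mechanics and say nothing about what the real data would show, and this is a raw comparison of percentages rather than the adjusted model the researchers ran.

```python
CUTOFFS = {"moderate": 10, "moderately severe": 15, "severe": 20}

# HYPOTHETICAL PHQ-9 totals for two small groups.
gam_scores = [8, 9, 12, 16, 21]
no_gam_scores = [10, 11, 13, 17, 22]


def share_at_or_above(scores, cutoff):
    """Percentage of scores at or above the cutoff."""
    return round(100.0 * sum(s >= cutoff for s in scores) / len(scores), 1)


gaps = {}
for label, cutoff in CUTOFFS.items():
    gam = share_at_or_above(gam_scores, cutoff)
    no_gam = share_at_or_above(no_gam_scores, cutoff)
    gaps[label] = no_gam - gam  # percentage-point gap between the groups
    print(f"{label} (>= {cutoff}): GAM {gam}%, no-GAM {no_gam}%, gap {gaps[label]}")
```

In this made-up example the groups differ by 40 percentage points at the cutoff of 10 and not at all at 15 or 20, which is the kind of threshold sensitivity a reader would want the authors to rule out.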

It’s definitely possible I’m being uncharitable here. A lot of this would be easy to check if the researchers would provide the data. But because they won’t, and because they didn’t even summarize it anywhere, we’re forced to guess about why they made the choices they did.

One Of The Researchers Responds

We’ll likely never know for sure, but I would wager that the mental health differences observed between the GAM and no-GAM groups were mostly caused by factors other than receipt of puberty blockers and hormones. I’m basing that theory on the fact that the groups appear to be quite different, given the massive difference in their respective dropout rates. Plus, the entire sample had data taken at a gender clinic, meaning that for the minors, their parents consented to bring them there and have them evaluated. They were in a pretty good position to get puberty blockers or hormones if they wanted them and if the doctors agreed it was the right move.

Someone made similar arguments in the online comment system on the JAMA website, and Tordoff responded (click “comments” if that link doesn’t work). First, she writes that at Seattle Children’s Gender Clinic, kids who wanted to go on GAM (and who had parental approval if they were minors) were generally put on it regardless of their mental health status:

In practice, the only instances when it would have been appropriate to delay initiation of PB/GAH is if there was a concern that a patient did not have the capacity to provide informed consent (which is exceedingly rare in adolescence). Therefore, youth who reported moderate to severe depression, anxiety, or suicidal thoughts were not precluded access to PB/GAH, especially since initiating PB/GAH is known to improve or mitigate these symptoms.

These are somewhat tangential points, but it’s interesting that Tordoff claims that “initiating PB/GAH is known to improve or mitigate these symptoms,” when the whole point of her study was to investigate this question, and when she and her team found that these medicines didn’t do that. I’m also genuinely curious what percentage of youth gender clinicians would agree with her that it is “exceedingly rare” an adolescent can’t provide meaningful informed consent for GAM — a lot of the ones I’ve spoken with have said this comes up with some frequency, especially in cases involving serious mental health comorbidities. (Tordoff somewhat misconstrues the new World Professional Association for Transgender Health guidelines elsewhere in her comment, in my opinion, but I’ll relegate that point to a footnote5.)

Either way, Tordoff is saying that the clinic usually didn’t view severe depression or suicidality as a reason to slow down the administration of hormones. Plus, she said, “we have empirical evidence that there were not significant differences in the prescription of PB/GAH by baseline mental health”:

We observed that a similar proportion of youth with poor mental health symptoms at baseline received PB/GAH during follow-up compared to youth who didn’t report these symptoms. Specifically, there were no significant differences in receipt of PB/GAH during our study follow-up period among youth with and without moderate to severe depression (64% v. 76%, p-value = 0.234); with and without moderate to severe anxiety (67% v. 71%, p-value = 0.703); and with and without self-harm or suicidal thoughts (62% v. 75%, p-value = 0.185) at baseline. Although we observed that youth with poor mental health at baseline were slightly less likely to be prescribed PB/GAH during our study, these difference[s] were small in magnitude (4-13 percentage points) and were not statistically significant.

The researcher who wanted to stay on background also said youth with and without mental health problems had “similar retention in the study,” meaning the differential rates of attrition shouldn’t be of much concern, although they didn’t provide any data backing up this claim. Assuming all this is true — that the clinic does readily administer blockers and hormones with little regard to the mental health status of its patients, and that there were no significant differences on the attrition front — it would at least dent my claim that mental health problems might be causing a lack of access to GAM in this study, rather than the reverse.

The problem is, the researchers offer no data in their study or supplementals showing any of this — and Tordoff’s informal data point in the direction we’d expect if my theory were true, that the more mentally ill kids were less likely to go on treatment! The differences she cites are by no means tiny — 12 percentage points for depression and 13 percentage points for self-harm or suicidal thoughts (the smaller one, 4 percentage points, applies to anxiety, where the researchers aren’t claiming to have found anything).
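Those gaps are easy to recompute from the percentages Tordoff quotes. What follows is only a minimal Python sketch: the dictionary layout and the function name are illustrative assumptions, and it works from her rounded percentages because the underlying counts were never published.

```python
# Share of youths who received PB/GAH during follow-up, split by baseline
# mental health status, as quoted in Tordoff's comment (rounded percentages).
# The layout and names here are illustrative; the raw counts are not public.
receipt_pct = {
    "moderate to severe depression": {"with": 64, "without": 76},
    "moderate to severe anxiety": {"with": 67, "without": 71},
    "self-harm or suicidal thoughts": {"with": 62, "without": 75},
}


def gap_in_points(row):
    """Percentage-point gap: receipt without the symptom minus receipt with it."""
    return row["without"] - row["with"]


gaps = {name: gap_in_points(row) for name, row in receipt_pct.items()}

for name, gap in gaps.items():
    print(f"{name}: {gap} percentage points less likely to receive PB/GAH")
```

The three gaps span the same 4-to-13-point range Tordoff cites, and all three point in the same direction: kids with the symptom at baseline were less likely to go on treatment.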

Tordoff points out that these differences aren’t statistically significant, which is true, but all that means is that their p-values are above the arbitrary p < .05 threshold. A statistically insignificant effect doesn’t mean no effect exists; rather, it means that one can’t conclusively exclude the possibility that any apparent difference is the result of random chance. In this case, the textbook definition of a p-value should cause us to conclude that, if there were no genuine relationships between these variables, there’s about a 23% chance we’d observe a difference at least as large as 12 percentage points in the rates of GAM receipt between kids with and without moderate to severe depression at baseline, and about a 19% chance we would observe a difference at least as large as 13 points between kids with and without self-harm or suicidal thoughts. So this by no means debunks the theory of serious, unaccounted-for baseline differences between the two groups.

Even if we adopt an extremely charitable stance toward Tordoff’s argument here and assume that there’s no evidence in her data of a connection between mental health status and whether or not a patient accessed GAM, remember that she and her colleagues are using very blunt yes/no measures of depression and suicidality — for both measures, the majority of points on the scale in question is treated as a yes rather than a no (specifically, scores from 10 to 27 are treated as a yes on the 0-to-27 depression scale, and scores from 1 to 3 are treated as a yes on the 0-to-3 suicide item). So even if it’s the case that the researchers didn’t observe a statistically significant difference in the percentage of two groups that reached the Moderate threshold, that doesn’t mean there weren’t important differences between these groups. In fact, almost every methodological choice made by the researchers would have the effect of blurring any differences that did exist.

To drive this point home, let’s imagine an intentionally extreme scenario involving just two very different kids in the study, both natal females:

Kid A, 12 years old, scores a 10 on the PHQ-9, doesn’t have gender dysphoria, and was dragged to the clinic because she has socially conservative parents unhappy she cut her hair short and wants to wear “boy” jeans. Her parents, upon being told there’s nothing “wrong” with their kid other than some (barely “moderate”) depression — perhaps exacerbated by her parents’ attempts to exert so much control over her attire and fashion — don’t bring her back to the gender clinic.

Kid B, also 12 years old, scores a 22 on the PHQ-9, has severe, crippling gender dysphoria and has already socially transitioned — he is a trans boy. He is quickly given an “official” diagnosis of gender dysphoria and started on puberty blockers, and he and his parents stay in contact with the clinic for the next three waves of data collection.

By the researchers’ own methods, these two kids would be seen as having “similar” levels of depression, and this dyad would contribute to the conclusion that there were no significant differences between kids who did and didn’t go on hormones or blockers, and who did versus didn’t drop out! But of course these are wildly different situations, and of course there are a lot of differences here being obscured by a model stuffed with yeses and nos, with 1s and 0s.
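To make the flattening concrete, here is a small Python sketch of what a yes/no cutoff does to these two hypothetical kids. The cutoffs of 10, 15, and 20 are the PHQ-9 thresholds the paper itself mentions; the function name and the two-kid dictionary are invented purely for illustration, and nothing here reproduces the researchers' actual model.

```python
# PHQ-9 cutoffs mentioned in the paper: 10 (moderate), 15 (moderately severe),
# 20 (severe). The function and the two-kid dictionary are hypothetical.
PHQ9_MAX = 27


def is_depressed(score, cutoff=10):
    """Return the yes/no flag a dichotomized analysis would see for a PHQ-9 score."""
    if not 0 <= score <= PHQ9_MAX:
        raise ValueError("PHQ-9 scores run from 0 to 27")
    return score >= cutoff


kids = {"Kid A": 10, "Kid B": 22}

# At the cutoff of 10, both kids collapse into the same "yes" category.
flags_at_10 = {name: is_depressed(score, 10) for name, score in kids.items()}

# Higher cutoffs, or the raw scores themselves, pull them apart again.
flags_at_15 = {name: is_depressed(score, 15) for name, score in kids.items()}
flags_at_20 = {name: is_depressed(score, 20) for name, score in kids.items()}
raw_gap = kids["Kid B"] - kids["Kid A"]

print("cutoff 10:", flags_at_10)
print("cutoff 15:", flags_at_15)
print("cutoff 20:", flags_at_20)
print("raw score gap:", raw_gap)
```

At the cutoff the paper relies on, the two kids are indistinguishable. Their 12-point gap on a 27-point scale only becomes visible at a higher cutoff or in the raw scores.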

Again, I’m speculating. But my point is that if the researchers’ method can’t even differentiate between these two kids in a hypothetical, intentionally extreme scenario, of course it’s possible that other, less profound differences are being obscured. At-least-moderately depressed kids in the study are 12 percentage points less likely to go on GAM as compared to kids who don’t meet that threshold. What’s the size of that difference if we bump that threshold up a few PHQ-9 points? More to the point, what is the difference in the average PHQ-9 scores of these two groups? It’s an eminently reasonable question that anyone should be able to answer simply by reading the paper itself. “Are the two groups we’re comparing actually similar?” is a first-two-weeks-of-Stats-101-level question. And yet the answer is nowhere to be found in this paper — it’s missing, like so much other basic information6.

Part Of The Problem Is That Even High-Quality Media Outlets Don’t Fact-Check Studies Like This One

I’ve already written about the abysmal job that mainstream media is doing covering youth gender medicine and don’t want to simply recite those points again, but it’s a very bad sign that this study was touted on Science Friday in the way that it was:

[Host] IRA FLATOW: Diana, can you walk me through what you found in this study about trans and nonbinary kids and teens?

DIANA TORDOFF: Absolutely. Thank you for having us here today. Our study was conducted at Seattle Children’s Hospital’s Gender Clinic. And our goal was to prospectively follow youth during their first year of receiving care to better understand their experiences, their well-being, as well as barriers they faced in accessing care. So we enrolled 104 trans youth, who are aged 13 to 20. And we found that youth who received puberty blockers or gender-affirming hormones were 60% less likely to be depressed and 73% less likely to have suicidal thoughts, compared to youth who did not receive these medications.

IRA FLATOW: That’s a huge difference.

DIANA TORDOFF: It’s huge.

…

IRA FLATOW: And major medical associations support gender-affirming care, right?

DIANA TORDOFF: Absolutely. These medications are both safe. And their use in adolescents is supported by a large number of medical and professional societies, including the American Academy of Pediatrics and the American Medical Association.

That’s it. The study found a “huge difference.” No follow-up questions, such as why the kids who went on blockers and hormones in Tordoff’s sample didn’t get better. I wouldn’t be surprised if it turned out that no one at Science Friday even read the study in full.

Tordoff also describes blockers and hormones for youth as “safe,” without any further elaboration or detail. This is an exaggeration of our present state of knowledge in this area. “Any potential benefits of gender-affirming hormones must be weighed against the largely unknown long-term safety profile of these treatments in children and adolescents with gender dysphoria,” said the UK’s National Institute for Health and Care Excellence in 2020, alongside a companion report on puberty blockers that found scant quality evidence of their safety and efficacy as well. The so-called Cass Review, another important UK effort geared at summarizing the data and steering the future course of youth gender treatment there, which was released just weeks ago in interim form, noted that “The important question now, as with any treatment, is whether the evidence for the use and safety of the medication is strong enough as judged by reasonable clinical standards.” As in, it’s still an open question. The report also notes that “Short-term reduction in bone density is a well-recognised side effect [of puberty blockers], but data is weak and inconclusive regarding the long-term musculoskeletal impact.”

In Sweden and Finland, health authorities are sufficiently concerned about the lack of evidence that it’s safe and effective to give puberty blockers and hormones to gender dysphoric minors that they have significantly scaled back the administration of these treatments. According to an unofficial translation of Finland’s new guidelines, announced in 2020, “puberty suppression treatment may be initiated on a case-by-case basis after careful consideration and appropriate diagnostic examinations if the medical indications for the treatment are present and there are no contraindications.” When Sweden followed suit, instituting new restrictions of its own just a month and a half ago, its National Board of Health and Welfare noted that “There are no definite conclusions about the effect and safety of the treatments,” per Google Translate. Sweden’s move was animated in part by an alarming alleged scandal — this public television documentary argues that doctors and administrators at a major hospital there appeared to cover up serious adverse effects, including a spinal injury and suicidality, among kids who had undergone these treatments (I interviewed the lead presenter here but it’s paywalled).

There isn’t a whiff of any of this in the JAMA Network Open paper, nor in the Science Friday coverage — you’d think there’s simply no debate here whatsoever, that the science is settled, when in fact the controversy has grown so heated that major European healthcare systems have changed their policies on these treatments. Maybe they’re wrong to have done so, but it’s quite surprising to see an outlet like Science Friday completely ignore any of this, and to see clinicians so uninterested in the controversy and so willing to gloss over warning signs in their own data.

It’s similarly surprising that JAMA Network Open would allow researchers to claim that a treatment was correlated with improvement over time in the mental health of a group of young people when it wasn’t. I reached out to the media contact there, as well as the journal’s top two editors, Frederick Rivara and Stephan Fihn, both of whom are at the University of Washington–Seattle, but I didn’t hear back.

I think the political environment is exacerbating things, unfortunately. At the state level, Republicans are seeking out genuinely bad and harmful policies. In Texas, what Greg Abbott and Ken Paxton are trying to do can only be described as state-sanctioned child abduction. It’s horrific. So part of what’s going on is there’s a race to prove just how wrong these politicians are, and to produce evidence showing the treatments work. As Tordoff and her colleagues put it at the end of their paper, “Our findings have important policy implications, suggesting that the recent wave of legislation restricting access to gender-affirming care may have significant negative outcomes in the well-being of TNB youths.” This is, again, a paper that found that kids who went on blockers and hormones did not improve. The science has become completely intertwined with the politics.

But the question of whether the GOP is off the rails on this issue (absolutely) and whether its policies will hurt kids who should go on blockers and/or hormones (absolutely) is different from the question of whether we should maintain vigilance and call out half-baked science when it comes to the literature on youth gender medicine (again: absolutely). Adolescent mental health and suicide research, in particular, is a vital area of science, and we should hold it to high standards. If we can’t agree that it’s wrong and potentially harmful to distort research on these subjects, what can we agree on?

Questions? Comments? The raw data from Tordoff et al. (2022)? I’m at singalminded@gmail.com or on Twitter at @jessesingal. The daytime image of Seattle Children’s Hospital is from here.

I guess technically with puberty blockers, “it’s complicated.” There’s a lot of heated debate over the claim that they are administered to “buy time” for a kid and their family to figure out if hormones are the right choice. If that’s the case, I don’t think stable mental health, rather than improved mental health, would necessarily be a knock on them. But if that’s true, no one should claim blockers ameliorate suicidality, which plenty of people do. It’s sort of a non sequitur in the case of this study, anyway, since, as per Table 1, so many more of the kids went on hormones (61.5%) than blockers (18.2%), meaning the study serves as an evaluation of the former much more than the latter.

Let’s say you want to test a new flu medication. You find 50 patients who have the flu and invite them into your medical clinic. There you get some baseline information about their symptoms, give them all the medication, and then check back a week later. Look at that — on average, they’ve improved significantly!

Unfortunately, that’s really only the first step to proving a medical treatment works. It’s necessary but not sufficient. There could be other reasons your patients improved. Flu symptoms often abate on their own in time, for one thing. For another, placebo effects can be powerful: Strange as it sounds, it wouldn’t be unprecedented if simply walking into a medical clinic and being seen by a doctor itself exerted a mysterious, salutary effect on the patients’ health.

To account for these other possibilities — that it wasn’t the drug, or wasn’t just the drug, that caused the improvement — you need a control group. The gold-standard version of such an experimental setup would be to find 100 people with the flu, and then divide them randomly into two groups of 50, each of them equally sick, on average. You treat them identically with the clinic visit, symptom measurement, and so on, with the only exception being half of them get the actual treatment and half of them get a sugar pill or some other sham treatment we wouldn’t expect to have any impact on the flu.

If, after that much more onerous procedure is followed, you find a statistically significant difference in the flu symptoms between the treatment and sham groups, then you’ve taken a pretty major step toward proving your treatment works. Improvement alone doesn’t cut it, at least as far as gold-standard science is concerned. But if you don’t even detect improvement, that’s a big problem!

Where I’m getting these numbers from:

The vast majority of kids who dropped out of this study didn’t access GAM. By the end of the study, just 6/63 of the remaining kids who provided mental health data, or 9.5% of the study participants, were in the no-GAM group. Overall, according to the researchers’ data, 12/69 (17.4%) of the kids who were treated left the study, while 28/35 (80%) of the kids who weren’t treated left it.

In order:

6: eTable 3, 12 Month / None

63: = 6 + 57, eTable 3, 12 Month / PB/GAH + None

12: = 69 - 57; see below for 69, and 57 is from eTable 2, 12 months / PB/GAH

69: Page 1, “By the end of the study, 69 youths (66.3%) had received PBs, GAHs, or both interventions”

28: = 35 - 7; see below for 35, and 7 is from eTable 2, 12 months / None

35: Page 1, “while 35 youths had not received either intervention”

I originally put a slightly panicky “Update” here because I managed to convince myself there was a potential error in my analysis of the attrition rates. This turned out to be a false alarm — my numbers are correct. I didn’t want to leave a paragraph-long update about what turned out to be a non-issue in the body of the piece, so I’ll stick it below for transparency’s sake, followed by just a bit more detail about the numbers in this section and Footnote 3 for the true obsessives.

The update’s original language:

(Update, evening of 4/6/2022: A couple people pointed out that I might be overestimating the magnitude of this difference by failing to account for kids who leave the “None” group not because they are dropping out of the study but because they switched to the “PB/GAH” group. I spent a couple more hours banging my head against eTable 3 to little avail this afternoon and evening; because the researchers provide so little concrete data, it’s very hard to figure out exactly what’s going on with regard to who is leaving versus crossing over to the other group. I’m asking a couple other people to check my work on this for me and once I hear back from them, if need be I’ll update or correct the figures in this paragraph and the footnote. It would take something very strange in the data for this to substantively affect my argument about differential rates of attrition: basically, it would need to be the case that a surprising number of kids switched from the None group to the PB/GAH group between 6 months and 12 months, and that a large number of kids already on blockers or hormones dropped out of the study during that same span. I think a large difference in dropout rate is a much more likely explanation, but, again, I’ll circle back when I’ve checked this more thoroughly. Just wanted to get an initial Update published for transparency’s sake.)

I’m not sure how I managed to convince myself something was afoot here. Calculating the different rates of attrition is pretty easy. In the prior footnote, I just used eTable 2, specifically the 12-month data…

…plus this language from the body of the article: “By the end of the study, 69 youths (66.3%) had received PBs or GAHs… while 35 youths had not received either PBs or GAHs (33.7%) (eTable 3 in the Supplement).”

At the final wave of data, we don’t really need to worry much about who switched groups versus dropped out, because we’re left with a simple calculation. The total number of kids who went on GAM (the kids still in the study at 12 months plus the ones who dropped out) has to add up to 69. The number still in the study is 57, so there are 12 dropouts. Similarly, the total number of kids who didn’t go on GAM (again, the kids still in the study at 12 months plus the ones who dropped out) has to add up to 35. The number still in the study is 7, so there are 28 dropouts. These are the numbers I originally presented and I have no idea how I managed to freak myself out about this.
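For the true obsessives, the same subtraction can be written out as a short Python sketch. The four input numbers are the ones cited above (69 and 35 from page 1 of the paper, 57 and 7 from eTable 2 at 12 months); the variable and function names are illustrative assumptions.

```python
# Totals by group from page 1 of the paper; 12-month counts from eTable 2.
# Variable and function names are illustrative, not taken from the paper.
totals = {"GAM": 69, "no GAM": 35}
remaining_at_12_months = {"GAM": 57, "no GAM": 7}


def dropout_summary(total, remaining):
    """Return (number of dropouts, dropout rate in percent to one decimal)."""
    dropouts = total - remaining
    return dropouts, round(100 * dropouts / total, 1)


summary = {
    group: dropout_summary(totals[group], remaining_at_12_months[group])
    for group in totals
}

for group, (dropouts, rate) in summary.items():
    print(f"{group}: {dropouts}/{totals[group]} dropped out ({rate}%)")
```

This reproduces the 12/69 (17.4%) and 28/35 (80%) figures given in the previous footnote.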

Tordoff writes of the clinic’s policy of administering puberty blockers and hormones even to severely suicidal, severely depressed kids, since it is “exceedingly rare” these conditions pose major obstacles to a minor giving true informed consent:

These practices are consistent with updated guidance from the draft WPATH SOC8 guidelines, which state that for youth experiencing acute suicidality, self-harm, or other mental health crises, “safety-related interventions should not preclude starting gender-affirming care” and that “while addressing mental health concerns is important, it does not mean that all mental health challenges can or should be resolve completely[.]”

I think she’s selectively cropping from the guidelines, leaving out the fact that the SOC8 includes plenty of cautions about the importance of making sure mental health problems are well-managed prior to the administration of blockers or hormones. To repeat the paragraph I referenced further up in this piece:

First, when a TGD [transgender or gender-diverse] adolescent is experiencing acute suicidality, self-harm, eating disorders or other mental health crises that threaten physical health, safety must be prioritized. According to the local context and guidelines, appropriate care should seek to mitigate threat or crisis such that there is sufficient time and stabilization for thoughtful gender-related assessment and decision making. For example, an actively suicidal adolescent may not be emotionally able to make an informed decision regarding [these treatments]. If indicated, safety-related interventions should not preclude starting gender affirming care.

There’s some hedging in the paragraph, but I don’t know how anyone could read it and come away thinking that the SOC8 thinks that severe suicidality and depression aren’t good reasons to potentially slow down major medical decisions, contrary to what Tordoff claims is going on at Seattle Children’s Gender Clinic. (I do wonder whether the clinic’s policies might have been more conservative in 2017 and 2018, when this group of kids came through, but we’re already deep into Footnoteistan and there’s no need to wander even further off-course…).

In one of her online comment responses, Tordoff writes that “our study design did not compare two cohorts…. Rather, we examined temporal trends within a single cohort of youth with a time-varying exposure variable.” Okay, but at the end of the day, the results they published were generated by comparing the probability of one group versus another experiencing various outcomes, so I don’t think there’s anything wrong with harping on this question of whether those two groups were similar enough for any such comparisons to be meaningful.

Damn, THIS is what I'm paying for.

Thanks, Jesse, for doing the work that researchers and reviewers obviously aren't willing to do.

I bet you're often feeling like you're fighting against windmills, but you're doing stellar and really important work.

That's how it's done - not by mindlessly accusing everyone you disagree with of being a "groomer".

Man the failure to acknowledge drop-outs is SO terrible. You didn't even mention the biggest problem with it - why exactly would a not-depressed person who does not want blockers go to a gender clinic? This is BY FAR the biggest problem in my eyes. Like take the situation the authors seem to be describing, one where no amount of distress keeps you off blockers. In that situation you would logically expect anyone who wants blockers to end up on blockers, which just leaves the people who don't want blockers in the non-blocker group. If you are in the non-blocker group AND experiencing no distress the clinic is providing you literally zero services of value - it's not therapy because you don't need therapy it's not any medical intervention because no intervention is needed - so OF COURSE they dropped out!