A (Sorta) Quick Response To The Errors In Emily Gorcenski's Article, "Jesse Singal Got More Wrong Than He Thinks" (Updated)

Round and round we go

If you find this sort of thing interesting, please consider becoming a paid subscriber to this newsletter, and DOUBLE-please (if that’s a thing) consider preordering my book, The Quick Fix: Why Fad Psychology Can't Cure Our Social Ills, which is out Tuesday, April 6, and which is about (ahem) overhyped and misleading behavioral-science claims.

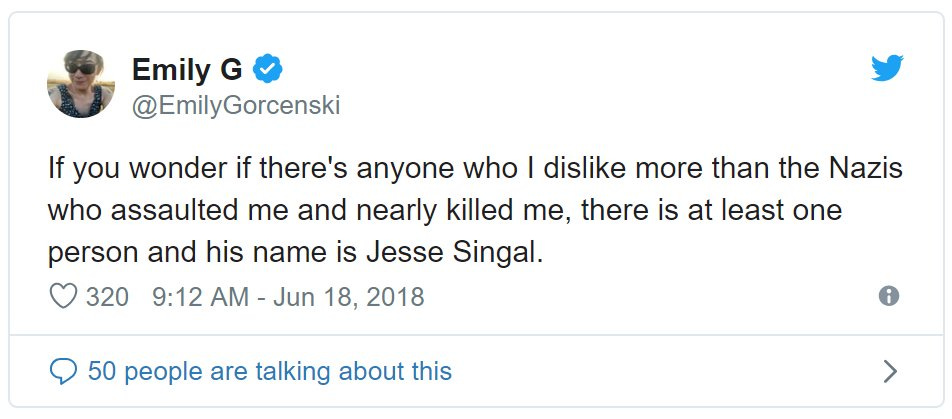

It’s probably a bad idea to respond to an article by someone who, after being literally, physically assaulted by a racist mob in Charlottesville, subsequently wrote that I’m the only person who she hates more than her attackers.

That said, the internet is a stupid place and a couple people pointed me toward a piece by Emily Gorcenski that seems to be gaining some traction on Twitter.

It mostly centers on a key 2013 study by Thomas Steensma and his colleagues at the Center of Expertise on Gender Dysphoria at Amsterdam University Medical Center, as well as a NY Mag article I wrote that touched on that study, and a subsequent Medium post noting an error I made in reporting on the paper. Steensma’s clinic is one of the most important gender clinics in the world, and it has produced some pathbreaking research I have referenced a bunch of times. Gorcenski’s piece is about the ‘desistance’ debate in particular: the question of how often gender dysphoria in young people abates on its own in time, without social or medical transition. I’m not going to provide an overview here but if you’re interested in more on the basics you can read this or this or this. It does get fairly dense.

Gorcenski’s piece makes some pretty ridiculous errors, as you’ll see — errors she would not have made if she had read the source material closely — but I don’t want to throw the baby out with the bathwater. She also noticed some statistical wonkiness in the Steensma paper that is worth someone with more data chops than I looking into. I don’t get the sense Gorcenski finds any of said wonkiness that fishy or suggestive of backbreaking quantitative problems with the paper (though she does criticize it on other grounds), but still: I believe she’s provided enough information for the pros (or the competent amateurs) to try to reproduce the weirdness she said she found, and they should attempt to do so. If anyone follows up on this and emails me I’m happy to connect you with Steensma directly (he’s also not a hard guy to get a hold of so I’d Google for his email address before reaching out to me directly).

This is not going to be a comprehensive response to all of Gorcenski’s claims — just four factually wrong ones. I disagree with a lot more about her analysis but just do not have the time or energy to address those points here. I’d prefer to just focus on these instances of intense sloppiness and basic misunderstanding.

Error 1

Gorcenski writes:

The Steensma paper studied 127 people who entered the Center of Expertise on Gender Dysphoria at the Vrije Universiteit (VU) University Medical Center in Amsterdam. This sampling represents 127 people out of 225 who were referred to the clinic between 2000 and 2008; inclusion criteria for the study included those who were at least 15 years of age at the time of follow-up, and follow-up occurred between 2008 and 2012. In Table 1 in the report, the data are broken down into two distinct groups: "Persisters" (n = 47) and "Desisters" (n = 80). This is the first mistake, as this dichotomy is false. The Desister category includes six responses by parents (as opposed to study subjects) and twenty-eight nonresponders. Simply put, the responses from these subgroups cannot be relied upon, and anyone with basic clinical study experience should know that loss to Follow-up is not the same thing as a negative response.

Two years after Singal relied on this analysis to shape his narrative in The Cut, he was forced to accept that the reasoning was faulty. He wrote a follow-up Medium post, and his article in The Cut was updated (although no time and date for the update is provided). Despite this correction, Singal's conclusions are not changed to reflect this methodological misunderstanding.

[...]

Singal was able to admit one of his mistaken interpretations of the Steensma et al data… Despite this admission, he fails to retract the conclusions he drew from his faulty analysis.

This is a complete misunderstanding of my error that I have debunked repeatedly — most recently just a couple weeks ago, when I responded to GLAAD’s decision to add me to a later-sorta-retracted list of anti-LGBT bigots in part on the basis of this misunderstanding (GLAAD piggybacked off The Daily Dot and Jezebel, both of which made the exact same mistake — this has been going on for years). So I’ll just link to that post and direct you to item (2).

The short version: I always acknowledged the nonresponse problem, including in my 2016 article. The error I acknowledged in 2018 was overestimating the number of true non-responders in the Steensma study and therefore underestimating the strength of the study. When you account for my error, the evidence for desistance gets stronger. “Despite this correction, Singal's conclusions are not changed to reflect this methodological misunderstanding” is a deeply misleading claim, because all this adjustment did was bolster the evidence for desistance being common, which is the argument I make in the NY Mag piece in the first place! There’s nothing much to change, conclusions-wise, but anyone who reads Gorcenski’s piece (or the GLAAD thing, or The Daily Dot, or Jezebel…) will think that I noticed an error that should have caused me to reconsider my conclusion but then failed to do so, which would in fact have been journalistically unethical. But the opposite occurred! It’s insane I keep having to type this. Why would I ‘retract’ the claim that the evidence suggests desistance is common upon finding out that the evidence is stronger than I initially believed?

(Other aspects of Gorcenski’s argument here really don’t make sense to me, either. For example, because of 28 nonresponders in a sample of 127 kids, “the responses from these subgroups cannot be relied upon,” full-stop? Whuh? Why? We’re only allowed to reference or to be swayed by perfect studies? But these are more in-the-weeds arguments that I’m going to mostly skip here in favor of focusing on stuff she got straightforwardly, objectively wrong.)

(Oh and it’s a little thing but sometimes you’ll see the number of nonresponders listed as 24, and sometimes as 28. This is just a matter of categorization — “In 4 cases (5.0%), the adolescents and the parents indicated that the GD from the past remitted, but these individuals refused to participate,” Steensma and his colleagues wrote. It’s basically a matter of opinion whether you want to count these patients as true nonresponders, but it doesn’t change the results much anyway.)
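For anyone who wants to check the 24-versus-28 bookkeeping, here is a minimal sketch in Python. The counts are only the ones quoted in this post from the Steensma paper; the variable and function names are my own illustrative choices, not anything from the study.

```python
# Counts as quoted in this post from Steensma et al. (2013).
# All names below are illustrative, not taken from the paper.
TOTAL_SAMPLE = 127
PERSISTERS = 47
STOPPED_COMING = 80        # the paper's "desister" column
NEVER_REACHED = 24         # no follow-up information at all
REFUSED_BUT_REPORTED = 4   # said GD had remitted, declined to participate


def tally(count_refusers_as_nonresponders: bool) -> dict:
    """Split the 80 kids who stopped coming under one of the two conventions."""
    nonresponders = NEVER_REACHED
    if count_refusers_as_nonresponders:
        nonresponders += REFUSED_BUT_REPORTED
    return {
        "nonresponders": nonresponders,
        "with_followup_info": STOPPED_COMING - nonresponders,
    }


if __name__ == "__main__":
    # Sanity check: the two headline groups add up to the full sample.
    assert PERSISTERS + STOPPED_COMING == TOTAL_SAMPLE
    for flag in (False, True):
        print(flag, tally(flag))
```

Under either convention the two buckets add up to the same 80 kids. The only thing that moves is whether you describe the contacted group as 52 or 56, which is the categorization choice described above.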

Error 2

Continuing with the text that follows, after Gorcenski complains I didn’t change my conclusions:

Indeed, the text immediately after the correction instead attacks several transgender people by name for not debunking the literature that he himself confesses to have misread.

This one is just an outright lie. Here’s the entire correction from that article, as well as the preceding text:

But the researchers explain exactly why they did this: “As the Amsterdam clinic is the only gender identity service in the Netherlands where psychological and medical treatment is offered to adolescents with GD, we assumed that for the 80 adolescents (56 boys and 24 girls), who did not return to the clinic, that their GD had desisted, and that they no longer had a desire for gender reassignment.” This isn’t a bulletproof assumption, of course — maybe some of those patients moved to another country, or something — but every research article involves approximations, and it would be hard to come up with a storyline in which this group had enough persisters in it to nudge the overall numbers all that much. (Update: The truth here is actually a bit more complicated. The researchers were able to get in touch with 56 of the 80 kids who stopped coming to the clinic, or their parents, and obtain enough information to determine whether they were still gender dysphoric. Either zero or one of them qualified as gender dysphoric at followup, depending on the scale used. I’ve posted an in-the-weeds explanation here, but the key takeaway is that it’s accurate to say that 24 lost-to-followup kids were assumed to no longer have dysphoria without the researchers knowing for sure, not 80 as [Julia] Serano and I initially reported — this research is stronger than I initially presented it to be. This was my mistake for not reading the study more carefully.)

Update, 7/25/2021: Of course I should have included the text “immediately after the correction” to show no one is attacked for making this error there either:

Again: Every study that has been conducted on this has found the same thing. At the moment there is strong evidence that even many children with rather severe gender dysphoria will, in the long run, shed it and come to feel comfortable with the bodies they were born with. The critiques of the desistance literature presented by Tannehill, Serano, Olson and Durwood, and others don’t come close to debunking what is a small but rather solid, strikingly consistent body of research.

That said, it’s completely understandable why the concept of desistance makes some trans people as well as some of their allies uncomfortable. A huge part of the challenge of being transgender, after all, is having your identity — your very sense of self — endlessly, exhaustingly critiqued, invalidated, and disregarded by people who couldn’t possibly understand where you’re coming from. In addition to the well-established threats they face in the form of physical violence and legislation aimed at ostracizing them, trans people are constantly dealing with the psychological threat of being told that they aren’t really what they say they are, that they’re just crazy or broken or delusional.

I didn’t attack anyone, let alone “several transgender people by name.” I mentioned another person who made the same error as I explained my own error, and then, in the text immediately after, I reiterated why I disagreed with a number of the critics of the desistance literature. That’s the only point in this excerpt where I even mention multiple people, and it’s in the context of disagreeing with them about desistance, not criticizing them for making the error under discussion. How the hell could I attack someone for making the same error I made? (Update, 7/25/2021: Language in this paragraph updated to include a reference to the text after my correction.)

Error 3

Gorcenski:

Despite this admission, he fails to retract the conclusions he drew from his faulty analysis. Instead, he doubles down on his conclusions and instead commits a common sense fallacy:

It makes perfect sense that the more gender dysphoric a kid is, the more likely they are to maintain regular contact with a gender identity clinic and to seek out its services. The kids who are there because Dad freaked out and overreacted when little — [does a Google search for the most common Dutch boys’ names] — Daan put on Mom’s dress once, but is a happy and healthy and non-dysphoric kid, aren’t going to keep coming.

Here, Singal invents a scenario from whole cloth. There is nothing in the Steensma publication that suggests that any of the study participants were referred to the Amsterdam clinic because they put on Mom's dress once; in fact Singal presents no evidence of the prevalence of this type of intervention for this type of behavior whatsoever. This is instead an appeal to popular misconceptions of transgender lives.

There’s no ‘fallacy’ here. This is, again, a bizarre misinterpretation of what I wrote, and one you can only pull off by selectively quoting my work. Here’s the entire bit in question:

None of the kids who stopped coming to the clinic and who the authors were able to get in touch with had clinically significant gender dysphoria at followup, as judged by one measure (and just one did judged by the other). Zero for 52, or zero for 56 if you include those four partial-responders.

Now, it’s important to note that this group was less dysphoric at intake. Of the total 80 kids in the sample who stopped coming, 39.3% of boys and 58.3% of girls met the criteria for what used to be called Gender Identity Disorder, or GID. That’s way lower than the corresponding percentages for the kids who stayed in touch with the clinic as they grew up — 91.3% and 95.% percent, respectively. This shouldn’t surprise us! It makes perfect sense that the more gender dysphoric a kid is, the more likely they are to maintain regular contact with a gender identity clinic and to seek out its services. The kids who are there because Dad freaked out and overreacted when little — [does a Google search for the most common Dutch boys’ names] — Daan put on Mom’s dress once, but is a happy and healthy and non-dysphoric kid, aren’t going to keep coming.

Gorcenski presents my put-on-a-dress-once scenario — which is obviously just a hypothetical — as some sort of unwarranted, specific claim about this clinical population or the clinic’s practices. But in context, my whole point is that I agree with what some activists have said about the limitations of these studies. I’m saying many of these kids who didn’t come back to the clinic aren’t true ‘desisters’ because they never had genuine gender dysphoria in the first place, as evidenced by the fact that they didn’t meet the threshold for what was then GID.

I cannot even fully understand exactly what Gorcenski is accusing me of here. I mean, I guess it’s technically true that “There is nothing in the Steensma publication that suggests that any of the study participants were referred to the Amsterdam clinic because they put on Mom's dress once” per se, but there’s plenty of evidence a chunk of the clinic’s patients were subthreshold for GID, which likely means some of them were there merely for exhibiting gender nonconforming behavior. And if later on those kids still don’t have gender dysphoria, of course we shouldn’t consider them to be ‘desisters’! What I’m saying makes perfect sense if you include the preceding paragraph. Which, I think, is why Gorcenski cropped this quote the way she did (though I obviously can’t prove that).

Error 4

Gorcenski:

Moreover, neither Singal nor Steensma et al provide any conclusive evidence that gender dysphoria has any such severity scale. Certainly, the DSM-5 includes no severity or degree scale with its diagnostic criteria of Gender Dysphoria; neither ICD-10 nor ICD-11 include a severity scale in their coding. While it might make intuitive sense that some gender dysphoria is "worse" than others, there is no commonly acceptable method for assessing this, and the attempts made in the literature so far have not conclusively determined a means by which gender dysphoria can be so ordered.

This is completely false. There are absolutely established scales to measure the intensity of childhood gender dysphoria. Here’s a bit from the very 2013 study at issue, where the authors explained how they measured “Gender Identity and Gender Dysphoria” among their childhood patients:

The Dutch version of the Gender Identity Interview for Children (GIIC) is a 12-item child informant instrument that measures 2 factors: “cognitive gender confusion” and “affective gender confusion.” Cognitive gender confusion is assessed by 4 questions asking whether the child identifies as a boy or a girl, Affective gender confusion is assessed by means of 8 questions focusing on affective aspects of gender identity (e.g.,“Are there any things that you don’t like about being a boy?”). Higher scores on the GIIC reflect more gender-atypical responses. The Dutch version of the Gender Identity Questionnaire (GIQ) is a 14-item parent-report questionnaire representing 1 factor. The focus of items is on gender-variant behaviors, with higher scores coded in this study to represent a greater frequency of gender-variant behaviors.

I’m sure some people won’t like the ‘confusion’ language (this study is from 2013 and the instruments from earlier), but that’s beside the point. This is proof of exactly what Gorcenski claims is absent. And when you look at the items on the Gender Identity Interview for Children, it’s quite clearly measuring not just ‘cross-sex’ behavior or interests (a common aspect of childhood assessment for GD that’s also present in the DSM’s diagnostic criteria), but also gender dysphoria qua gender dysphoria:

So it’s true, as Gorcenski indicates, that the DSM is concerned with making the binary determination of whether someone does or doesn’t have gender dysphoria (formerly Gender Identity Disorder) rather than assessing its severity. But she’s just completely wrong that there aren’t established scales that measure the severity of GD. If you read the paper closely, this is obvious. (Then again, I can’t be too harsh since this whole thing was sparked by my own failure to read the paper closely enough back in 2016.)

That’s it — that’s all I’m saying on this. Someone should look into the statistics issue but I just wanted to publish as concise a response as possible about the wrongest claims in Gorcenski’s piece. The lead image, of a merry-go-round on which — I can only imagine — the children are forced to answer the same questions over and over and over, comes from Mollie Sivaram via Unsplash.

Update, 7/23/2021: I was pointed back to this post by this nonsense, and I realize there’s actually one more thing I want to address all these months later.

Error 5

Gorcenski writes:

When he wrote on concerns over the safety of puberty blockers in a recent Substack post, he did not report on studies that show that they are safely and effectively used for treating Central Precocious Puberty, such as this study. Instead, he wrote about expert but qualitative concerns over long-term safety of these drugs, without providing scientific reference for such concerns or even acknowledging that a gap in the science exists.

This is, again, just straightforwardly dishonest. I addressed exactly this issue in the article Gorcenski is criticizing, which she naturally doesn’t link to because then people could pull it up and actually read it.

In the relevant section, I first quote another writer, who said in Foreign Policy: “Doctors recommend prescribing a medicine called leuprolide acetate, sold under the brand name Lupron, which has been used to hold off premature puberty, a condition known as ‘central precocious puberty,’ since 1993. As with other puberty blockers, the effects are reversible.”

Then I explain that this doesn’t necessarily tell us anything about the very different use-case of puberty blockers in transgender medicine, and cite some expert concern on this front:

So the evidence for blockers being ‘reversible’ stems mostly from situations in which kids who went on them eventually proceeded to their natal puberty, not in which they took cross-sex hormones and went through a partial version of the ‘other’ puberty (‘partial’ because if you are born male/female, you don’t experience each and every element of a natal female/male puberty when you take cross-sex hormones). And there’s far less evidence, overall, for the safety and reversibility of puberty blockers in this latter use-case. It’s a distinction that matters a great deal.

Bell v Tavistock goes on to lay out some evidence that blockers shouldn’t be presented as reversible in an unequivocal manner. For example, it quotes testimony provided to the court by Annelou de Vries, a leading Dutch clinician, who wrote, “Ethical dilemmas continue to exist around … the uncertainty of apparent long-term physical consequences of puberty blocking on bone density, fertility, brain development and surgical options.” (Fertility is an excellent example of how the drugs’ ‘reversibility’ hinges entirely on what comes next, natal puberty or cross-sex hormones. If you take puberty blockers and go on to your natural natal puberty, you’ll likely be fertile, while if you instead proceed to cross-sex hormones, you likely won’t be.)

What is the point of any of this (of writing, thinking, debating, critiquing) if we can have a situation like this where Person A says “Here’s why the fact that there’s some evidence for the long-term safety and reversibility of puberty blockers in precocious-puberty contexts doesn’t apply to their use in transgender medicine,” and Person B offers a rebuttal of, “Person A ignores the fact that there’s some evidence for the long-term safety and reversibility of puberty blockers in precocious-puberty contexts”?

This is so stupid, so demoralizing, and such a waste of time. Now I’m done.
